Last week, I happened to glance at the codebase for rust-analyzer. I realized that r-a and Krabby (my very-very-WIP Rust compiler) have a lot in common; r-a re-implements a lot of rustc and has benchmarks and tests to compare it to rustc. While Krabby has different high-level goals, it needs exactly the same infrastructure.

One component that stuck out to me was macro expansion. I’ve previously heard that r-a puts a lot of effort into macro expansion, partly to offer interesting LSP functionality (e.g. “expand this macro”) and partly because macros complicate normal LSP functionality (e.g. “goto definition”, if the definition is generated by a macro). While r-a sometimes re-uses code from rustc, a quick peek around the codebase revealed that most of r-a’s macro handling code is written from scratch. It also appears to be much simpler; I’m not sure whether that’s because it has been written more concisely (with hindsight from rustc), because it is less concerned with diagnostics, or because it differs from rustc in some edge cases.

Declarative macro expansion (e.g. foo!(a, b)) has two obvious steps: you need to match the input (a, b) against the match arms in foo (“parsing”); then you need to compute the output by filling in meta-variables in the match arm body (“transcribing”). r-a’s mbe/expander/matcher.rs, which implements the parsing step, has a top-level comment referencing rustc’s mbe/macro_parser.rs. It appears they both use the same algorithm, although I have not fully understood their implementations.

The explanation of the algorithm (from mbe/macro_parser.rs) is really interesting:

Quick intro to how the parser works:

A “matcher position” (a.k.a. “position” or “mp”) is a dot in the middle of a matcher, usually written as a

·. For example· a $( a )* a bis one, as isa $( · a )* a b.The parser walks through the input a token at a time, maintaining a list of threads consistent with the current position in the input string:

cur_mps.As it processes them, it fills up

eof_mpswith threads that would be valid if the macro invocation is now over,bb_mpswith threads that are waiting on a Rust non-terminal like$e:expr, andnext_mpswith threads that are waiting on a particular token. Most of the logic concerns moving the · through the repetitions indicated by Kleene stars. The rules for moving the · without consuming any input are called epsilon transitions. It only advances or calls out to the real Rust parser when nocur_mpsthreads remain.Example:

Start parsing a a a a b against [· a $( a )* a b].

Remaining input: a a a a b

next: [· a $( a )* a b]

- - - Advance over an a. - - -

Remaining input: a a a b

cur: [a · $( a )* a b]

Descend/Skip (first position).

next: [a $( · a )* a b] [a $( a )* · a b].

- - - Advance over an a. - - -

Remaining input: a a b

cur: [a $( a · )* a b] [a $( a )* a · b]

Follow epsilon transition: Finish/Repeat (first position)

next: [a $( a )* · a b] [a $( · a )* a b] [a $( a )* a · b]

- - - Advance over an a. - - - (this looks exactly like the last step)

Remaining input: a b

cur: [a $( a · )* a b] [a $( a )* a · b]

Follow epsilon transition: Finish/Repeat (first position)

next: [a $( a )* · a b] [a $( · a )* a b] [a $( a )* a · b]

- - - Advance over an a. - - - (this looks exactly like the last step)

Remaining input: b

cur: [a $( a · )* a b] [a $( a )* a · b]

Follow epsilon transition: Finish/Repeat (first position)

next: [a $( a )* · a b] [a $( · a )* a b] [a $( a )* a · b]

- - - Advance over a b. - - -

Remaining input: ''

eof: [a $( a )* a b ·]

To me, this looks like a BFS; every possible parse is evaluated simultaneously, over a single pass through the input tokens. My first thought was that a DFS would be better here; it would cover the same ground but require less memory and probably play better with the CPU. Some prior experience with parsing algorithms (I think memories of packrat parsing) reminded me that a DFS might require caching meta-variables; if the macro requires a Rust expression to be parsed (e.g. $a:expr), we might cache the result for later in the DFS traversal.

The cache sounds relatively simple to implement; you’d keep a hash table mapping “parse an expression/statement/etc. at position X” to its result. Every time a meta-variable needs to be matched at some position Y, the hash table would be checked first; on a miss, it would be computed from scratch. The size of the table is bounded quite well. But I worried about the soundness of this approach; what if the length of the input mattered too? If one arm of a macro required ($e:expr), and the other ($e:expr + 1) (at the same position), a cached result from the first parse could not be used for the second one.

This case is sound; Rust prohibits meta-variables from being followed by tokens that they could consume. But ambiguity is a problem. In rustc’s matcher code, before the explanation of the algorithm, Earley parsers are mentioned — specifically “We don’t say this parser uses the Earley algorithm, because it’s unnecessarily inaccurate.” But the algorithm is a subset of an Earley parser, and as the Wikipedia page mentions, Earley parsers have a quadratic runtime … for unambiguous grammars. For ambiguous grammars, Earley parsers have cubic runtime. Maybe we can force such behaviour out of rustc…

Around this time, I was talking to jyn, and she linked me to an existing instance of degenerate behaviour in macro expansion. So degenerate cases can occur! Curious whether there were more, I decided to look into ambiguity further.

Rust macros can match meta-variables with repetition, e.g. $($e:expr),*. This pattern would match a comma-separated sequence of expressions. Unlike (most?) regexes, repetitions in macros are not deterministic; they can match fewer times if needed. The following example compiles successfully:

macro_rules! foo {

( $( @ )+ ) => {};

}

macro_rules! bar {

( $( @ )+ @ ) => {};

}

foo!(@ @ @ @); // parsed as '(@ @ @ @)'

bar!(@ @ @ @); // parsed as '(@ @ @) @'But there are cases of ambiguity here. If we put two such repetitions next to each other, they could overlap. In fact, we could repeat a repetition! Take a look:

macro_rules! foo {

( $( @ )+ $( @ )+ ) => {};

}

macro_rules! bar {

( $( $( @ )+ )+ ) => {};

}

foo!(@ @ @ @); // error: ambiguity: multiple successful parses

bar!(@ @ @ @); // error: ambiguity: multiple successful parsesHmm… so what are the successful parses here? Let’s enumerate them:

Valid parses for `foo!(@ @ @ @)`:

- (@ @ @) (@)

- (@ @) (@ @)

- (@ @) (@) (@)

- (@) (@ @ @)

Valid parses for `bar!(@ @ @ @)`:

- (@ @ @ @)

- (@ @ @) (@)

- (@ @) (@ @)

- (@ @) (@) (@)

- (@) (@ @ @)

- (@) (@ @) (@)

- (@) (@) (@ @)

- (@) (@) (@) (@)In bar, the number of valid parses grows quadratically with the size of the input. If there was one more token, the matcher code would try to extend all of these valid parses, and hence we find the cubic growth mentioned on Wikipedia. But maybe we’re okay — such ambiguity raised an error, after all. It could never happen in valid Rust code. Right? …right?

Unfortunately, we have not reached the end of this blog post. Using its tracking of all valid parses, rustc checks that the end result is unambiguous, so this example does not work. But it only checks this on every macro invocation; rustc does not have a problem with our ambiguous macro on its own. This makes sense; it is impossible to identify ambiguous grammars. Let’s pull one final trick and build an ambiguous grammar that is unambiguous in a single case:

macro_rules! parse {

// 'rustc' complains if I don't put an '@' in the outer

// repetition, but it doesn't affect our analysis.

( $( $( @ )+ @ )+ @ @ ) => {};

// ^^^ n-2 tokens

}

parse!(@ @ @ @);

// ^^^^^^^ n tokensThis successfully compiles. The only valid parse is the minimal one, where the repetitions only occur once. But rustc’s intermediate processing here involves cubic growth; you just need to change the number of @ tokens (n tokens in the invocation and n-2 at the end of the match arm). Is it time for a graph?

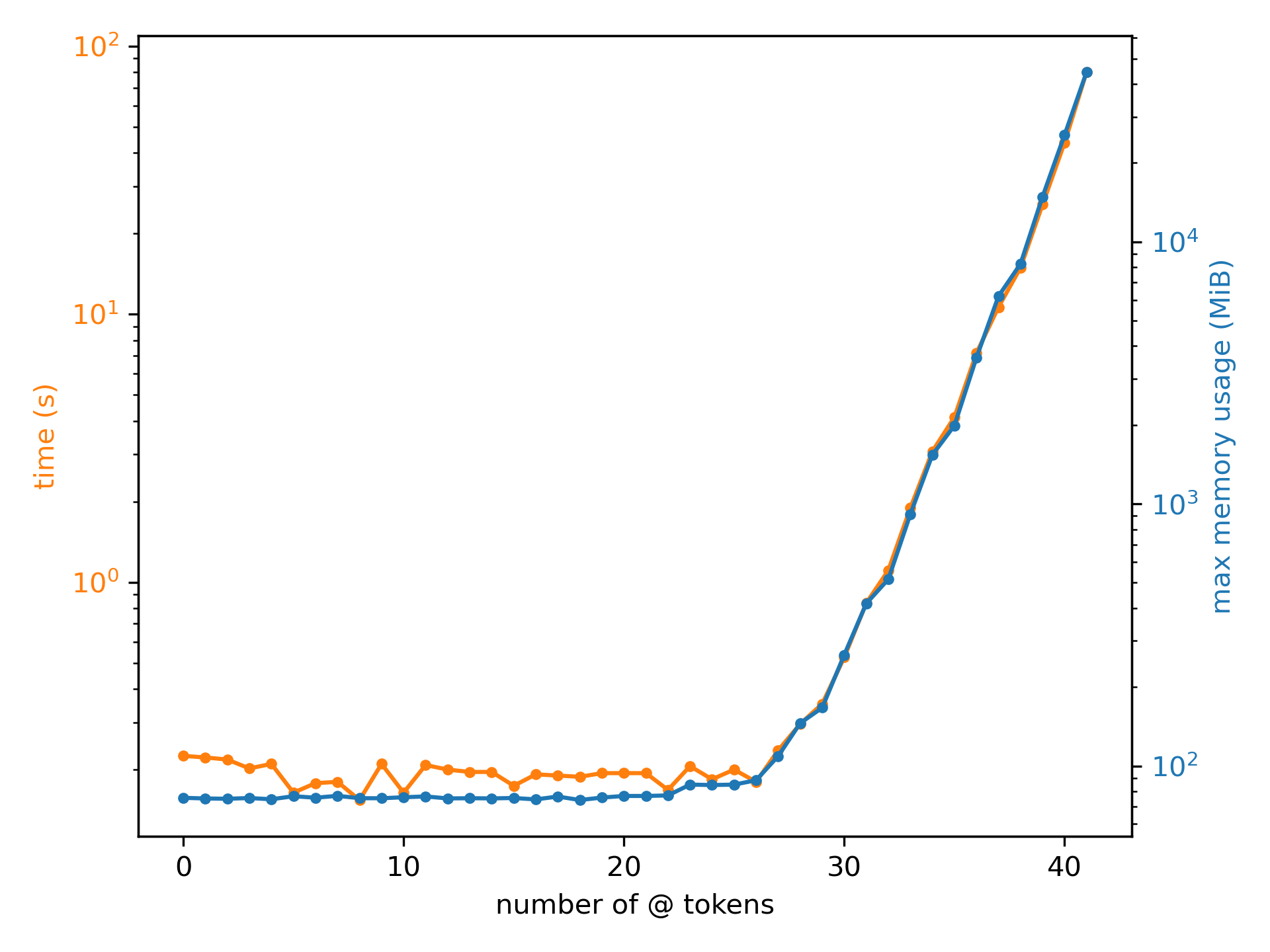

I wrote a proof of concept that produces this Rust code given a number of @ tokens and uses cargo -Zscript to execute the file directly. I captured the runtime and maximum memory usage using /usr/bin/time, running each sample 5 times (while preventing caching!) and averaging the results. I could only get to

-f '%e %M'n = 41; you can see why:

I was expecting cubic growth, but this looks concerningly exponential (note that both time and memory usage are plotted on a log scale). If there’s a competition for the shortest snippet of valid Rust code that borks rustc, I hope this is a good submission. Note that we’re only parsing fixed @ tokens; you could worsen the situation (presumably by a constant factor?) by parsing complex meta-variables like $:expr.

what now?

It turns out that an Earley parsing style is completely unnecessary here. I mentioned before that determining whether a grammar is ambiguous is undecidable; but that is specifically for context-free grammars (CFGs). CFGs (but usually their well-ordered variants, PEGs) are commonly used for defining programming language syntax, because they allow recursion. But macro match arms are not recursive; they can use (unordered) repetition, sure, but that is much more limited than real recursion. This is somewhat noticeable in the rustc code’s description: it references progress through the match arm as a single integer position. Progress through a general CFG/PEG can’t be expressed that way; it would require a stack.

While statically detecting ambiguity for macro match arms might be decidable, there’s no real point to it because rustc allows it today. I’m sure there are plenty of macros in real-world code that are slightly ambiguous, so disallowing it would be frustrating for everyone. But we can optimize the parsing of macro invocations, both in detecting ambiguity and handling semi-ambiguous grammars.

A simple variation on a packrat parser would allow us to parse macro match arms while detecting ambiguity, in guaranteed-linear time. Let’s phrase our parser’s steps as “try to parse input[tpos..] with arm[mpos..]”, where input is the macro invocation input and arm is the pattern specified by the match arm. A step can succeed (with meta-variable results), fail, or detect ambiguity. Repetitions like $(<iter>)* <rest> are handled by attempting two parses (for <iter> <rest> and <rest>) at the current position. If either attempt succeeds, its result is forwarded; if both succeed, ambiguity is detected. Steps are memoized because they could be reached multiple times due to repetitions.

Even if most macros out there are not ambiguous, they use repetitions and so will suffer in rustc’s current implementation. The Wikipedia page mentions that Earley parsing is linear-time for deterministic grammars; but macro repetitions are not deterministic! Any macro using repetitions can encounter quadratic behaviour today. The packrat-based approach I described above is guaranteed to run in linear-time for all inputs; we memoize steps, and there are only input.len() * arm.len() possible steps.

I’ve tried to avoid contributing to rustc because I’m worried I’ll get sucked into the codebase, but this issue is concerningly fun. I may be quite busy right now, but I’m going to look into implementing this, validating my hypotheses, and maybe opening a PR.